Risk, Uncertainty

Basic Olicognograph: Approaches to Risk

Risks to my Pocket (God, my portfolio!)

Risks are treated quantitatively, and well, in mathematical formulations in the manner of Newtonian mechanics, once the concept is taken as objective or easy to aggregate, by comparing differences in the probabilities of success or failure. But relying on such mechanical formulas, whether by assuming on one side that functions of the average of a distribution are the most consistent or by invoking on the other side a principle of ergodicity, does not reflect much of patterned reality. We imagine that a good symbol for this complex, contradictory unit (possibly filled with asymmetric colors) is the "yin-yang" picture, since it holds most of the quantitative behavior if you explore its fields: exactness when tending toward the tip of the claw, probability when embracing the opposite, as well as the "bifurcated change" when crossing the border from one color to the other, whether locally or globally. You can also examine many common geometric senses of concepts as they exist in decision-making.
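To make the point about ergodicity concrete, here is a minimal sketch of a classic textbook gamble (our assumption, not taken from the text): each round multiplies wealth by 1.5 or by 0.6 with equal probability. The names `UP`, `DOWN` and `P_UP` are illustrative. The average over many parallel players grows, while the per-round growth a single player experiences over time shrinks, so "average of the distribution" and "average along one history" give opposite verdicts.

```python
import math

# Hypothetical multiplicative gamble: wealth is multiplied by UP or DOWN
# with equal probability each round (illustrative values, not from the text).
UP, DOWN, P_UP = 1.5, 0.6, 0.5


def ensemble_growth_factor(up: float, down: float, p_up: float) -> float:
    """Expected one-round multiplier, i.e. the average over many parallel players."""
    return p_up * up + (1.0 - p_up) * down


def time_average_growth_factor(up: float, down: float, p_up: float) -> float:
    """Per-round multiplier one player experiences over a long run:
    the geometric mean, computed from the expected log-growth."""
    return math.exp(p_up * math.log(up) + (1.0 - p_up) * math.log(down))


if __name__ == "__main__":
    ens = ensemble_growth_factor(UP, DOWN, P_UP)
    tim = time_average_growth_factor(UP, DOWN, P_UP)
    print(f"ensemble average multiplier: {ens:.4f}")  # 1.0500
    print(f"time-average multiplier:     {tim:.4f}")  # 0.9487
```

An expected-value reasoner accepts this gamble (1.05 > 1), yet almost every individual player is ruined in the long run (0.9487 < 1): the process is not ergodic.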

"Knight defined risk as randomness with known probabilities, whereas uncertainty is randomness with unknown probabilities. Bernoulli, in 1738, discussed the famous St. Petersburg paradox and argued that risky decisions could be assessed on the basis of expected utility (as later explained). Fisher, in 1906, suggested measuring economic risk by variance, and Tobin related investment portfolio risk to the variance of returns". Then, "originally, in most modern economics, with Arrow, risk was based on expected returns. Allais's paradox, a critique of this, was summarized as: 'the lower the risk, the more averse we are to it'. Since the 1960s, a great deal of economic research has been concerned with risk and randomness at all levels of decision-making (financial markets, insurance, investments, etc.)".
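The St. Petersburg paradox can be checked numerically. In a minimal sketch of the usual version of the game (payoff 2^k with probability 2^-k, capped at `max_rounds` so the sums are finite; the cap is our assumption), every extra round adds exactly 1 to the expected payoff, so the expected value grows without bound. Bernoulli's logarithmic utility, by contrast, converges, and its certainty equivalent approaches 4.

```python
import math


def truncated_expected_value(max_rounds: int) -> float:
    """Expected payoff of a St. Petersburg game capped at max_rounds.
    The payoff is 2**k with probability 2**-k, so each round adds exactly 1."""
    return sum((0.5 ** k) * (2 ** k) for k in range(1, max_rounds + 1))


def truncated_expected_log_utility(max_rounds: int) -> float:
    """Bernoulli-style expected utility with u(x) = ln(x) for the same capped game."""
    return sum((0.5 ** k) * math.log(2 ** k) for k in range(1, max_rounds + 1))


if __name__ == "__main__":
    for n in (10, 100, 1000):
        ev = truncated_expected_value(n)
        eu = truncated_expected_log_utility(n)
        # Certainty equivalent: the sure amount that has the same log utility.
        print(f"rounds={n:5d}  expected value={ev:7.1f}  certainty equivalent={math.exp(eu):.4f}")
```

The expected value equals the number of rounds allowed, while the certainty equivalent settles near 4, which is the intuition behind judging risky prospects by expected utility rather than expected money.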

"In 'advanced' economics, risk has been of special concern, for example in:

- Pricing models: model risk is defined as “the risk arising from the use of a model which cannot accurately evaluate market prices, or which is not a mainstream model in the market.”

- Risk measurement models: model risk is defined as “the risk of not accurately estimating the probability of future losses.”

Sources of model risk in pricing models (you may find analogies in other registers) include:

- Use of wrong assumptions (leading to misspecification),

- Errors in parameter estimation (leading to bias),

- Errors resulting from discretization (invalidating the decision criteria),

- Errors in market data (misrepresenting the population concerned by the problem).

On the other hand, sources of model risk in risk measurement models include:

- The difference between the assumed and the actual distribution, which can outdate your conclusions and mislead your audience, and

- Errors in the logical framework of the model.

Now, beyond the question of the purse, many models can be derived simply by extending the concept of price or value in some primary, instinctive sense, not only where money is involved". "In the economic analysis of the value of information under uncertainty, Blackwell showed that the value of information is never negative for expected-utility preferences. As with the analysis of choice under uncertainty in general, most discussion of the value of information has focused either on choice from a finite set of alternatives or on cases where the decision variable is a scalar. Sandmo developed a path-breaking extension of the portfolio problem by considering the production choices of a risk-averse firm facing price uncertainty. Graham proposed the idea of a margin of safety as a measure of risk and also recommended (portfolio) diversification to reduce risk. Exponents of the value-based investment methodology include renowned investors. However, Markowitz was the first to formalise portfolio risk, diversification and asset selection in a consistent financial-mathematics framework. All that was needed were asset return means, variances and covariances".
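Since Markowitz's framework needs only means, variances and covariances, its core can be sketched in a few lines. This is a minimal illustration with hypothetical two-asset inputs (the returns, volatilities and correlation below are ours, not from the text); portfolio variance is the double sum w^T Σ w, and mixing imperfectly correlated assets gives a standard deviation below that of either asset alone.

```python
import math
from typing import List, Sequence


def portfolio_mean(weights: Sequence[float], means: Sequence[float]) -> float:
    """Expected portfolio return: sum_i w_i * mu_i."""
    return sum(w * m for w, m in zip(weights, means))


def portfolio_variance(weights: Sequence[float], cov: Sequence[Sequence[float]]) -> float:
    """Portfolio variance w^T Sigma w, written out as a double sum."""
    n = len(weights)
    return sum(weights[i] * cov[i][j] * weights[j] for i in range(n) for j in range(n))


def covariance_matrix(stdevs: Sequence[float], corr: Sequence[Sequence[float]]) -> List[List[float]]:
    """Build Sigma_ij = rho_ij * sigma_i * sigma_j."""
    n = len(stdevs)
    return [[corr[i][j] * stdevs[i] * stdevs[j] for j in range(n)] for i in range(n)]


if __name__ == "__main__":
    # Hypothetical two-asset inputs, for illustration only.
    means = [0.06, 0.10]
    stdevs = [0.20, 0.30]
    corr = [[1.0, 0.2], [0.2, 1.0]]
    cov = covariance_matrix(stdevs, corr)

    for w1 in (1.0, 0.75, 0.5, 0.0):
        w = [w1, 1.0 - w1]
        sd = math.sqrt(portfolio_variance(w, cov))
        print(f"w1={w1:.2f}  mean={portfolio_mean(w, means):.4f}  stdev={sd:.4f}")
```

With these inputs the 50/50 mix has a variance of 0.0385 (standard deviation about 0.196), lower than the 0.20 of the safer asset alone: diversification in Graham's sense, measured in Markowitz's terms.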

More fundamentally, from information theory: "The Kullback-Leibler discrepancy has been suggested as a measure of risk based on differences in knowledge. Knowledge is defined as the ability to predict consequences accurately, and predictive accuracy is assessed operationally using scoring rules for probability forecasters. The logarithmic scoring rule turns out to be a way of assessing differences in knowledge, precisely for constant absolute risk aversion. The multiple-bounded uncertainty choice (MBUC) value elicitation method allows respondents to indicate qualitative levels of uncertainty, as opposed to a simple yes or no, across a range of prices".
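The link between the logarithmic scoring rule and the Kullback-Leibler discrepancy can be shown directly: if outcomes follow a distribution p, a forecaster announcing q loses, on average, exactly KL(p || q) in log score compared with one who knows p. A minimal sketch, with hypothetical "expert" and "novice" forecasts chosen only for illustration:

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """D_KL(p || q) = sum_i p_i * ln(p_i / q_i), in nats; terms with p_i = 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def expected_log_score(p: Sequence[float], forecast: Sequence[float]) -> float:
    """Expected logarithmic score E_p[ln forecast(X)] when outcomes follow p (higher is better)."""
    return sum(pi * math.log(fi) for pi, fi in zip(p, forecast) if pi > 0)


if __name__ == "__main__":
    truth = [0.5, 0.3, 0.2]         # hypothetical "true" outcome probabilities
    expert = [0.45, 0.35, 0.20]     # a well-informed forecaster
    novice = [1 / 3, 1 / 3, 1 / 3]  # an uninformed forecaster

    for name, q in (("expert", expert), ("novice", novice)):
        gap = expected_log_score(truth, truth) - expected_log_score(truth, q)
        print(f"{name}: log-score gap={gap:.5f}  KL={kl_divergence(truth, q):.5f}")
```

The two columns coincide, and the less knowledgeable forecaster shows the larger discrepancy, which is the sense in which KL measures a "difference in knowledge".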

Our philosophy, or rather our observation, is that many concepts of theoretical economics seem implausible when set against the complexity of reality; yet the need for wise "simplexification" leads us to observe:

- Mathematical forms carry reasons that can apply to many different registers, providing concepts that are then interpreted according to the nature of each register. In practice, the question becomes whether it is best to learn to apply the same formal patterns flexibly, with interpretations adapted to each register, since different registers have to be mixed and structured to handle a complex problem properly. (Any brain is developed enough, though poorly trained, to distinguish an analogy, to see what it has in view, and to know how it differs from a demonstration, even when one is not the person who will carry out that demonstration, the point being to use its results.)

- We also observe that specialists are left to make the superiority of their own register prevail in human policies, and mostly to conclude that others lack rationality when their predictions fail, while explaining those failures with arguments that seem to come from neighboring registers: geopolitics for the economist, economics for the ecologist, domestic life for the sociologist, and so on, for everything relevant to human concerns.

More or less Really Risky Uncertainties

"From a health and safety perspective, risk is simplified to the probability of harmful consequences or expected losses (of lives, people injured, property, livelihoods, economic activity disrupted or environment damaged) resulting from interactions between natural or human-induced hazards and vulnerable conditions. Risk is conventionally expressed by the notation Risk = Hazard × Vulnerability".
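Read as a product, the conventional notation turns into an expected-loss calculation once an exposure term is added. A minimal sketch, assuming each scenario is described by an annual hazard probability, a vulnerability (share of exposed value lost if the event occurs) and an exposed value; the `HazardScenario` type and all figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class HazardScenario:
    name: str
    annual_probability: float  # hazard: chance the event occurs in a given year
    vulnerability: float       # share of the exposed value lost if it occurs (0..1)
    exposed_value: float       # value at stake, in money units


def expected_annual_loss(scenarios: Iterable[HazardScenario]) -> float:
    """Sum of hazard x vulnerability x exposure over the scenarios
    (linearity of expectation lets the per-scenario losses be added)."""
    return sum(s.annual_probability * s.vulnerability * s.exposed_value for s in scenarios)


if __name__ == "__main__":
    # Hypothetical figures for one district, for illustration only.
    scenarios = [
        HazardScenario("river flood", 0.02, 0.30, 5_000_000),
        HazardScenario("windstorm", 0.10, 0.05, 5_000_000),
    ]
    print(f"expected annual loss: {expected_annual_loss(scenarios):,.0f}")
```

Here a rare but damaging flood (30,000 per year) and a frequent but mild windstorm (25,000 per year) weigh almost the same, which is why hazard and vulnerability must be considered together.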

"In investments (for example in infrastructure), the choice problem concerns investing an amount of money in a safe option and a risky option when there is a “global risk” of losing all earnings from both options, including any return from the risky option. Global risk can reduce the amount invested in the risky option. This result cannot be explained by classical Expected Utility or by its main contenders, Rank-Dependent Utility and Cumulative Prospect Theory. One explanation could be to take emotions into account" (hence enaction, after the cognitive arts).

Among the technicalities of assessment are discount rates, present (discounted) value, the cost of money, the proper scheduling of cash flows, interest rates, sources of cost savings, capital replacement and many other methods to attend to. Note that they will be usefully complemented by how well you handle the strange things of reality, by your ability to explain these complicated matters and by practical involvement. That is the complicated network of social values.
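Discounting is the most common of these technicalities. A minimal sketch of net present value, assuming end-of-period cash flows and a constant rate per period (a simplification; real assessments often use term structures or scenario-dependent rates). The project figures are hypothetical.

```python
from typing import Sequence


def net_present_value(rate: float, cash_flows: Sequence[float]) -> float:
    """Discount each cash flow CF_t (t = 0, 1, 2, ... periods from now) by (1 + rate) ** t."""
    return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(cash_flows))


if __name__ == "__main__":
    # Hypothetical project: invest 100 now, receive 30, 40 and 50 over the next three years.
    flows = [-100.0, 30.0, 40.0, 50.0]
    for r in (0.0, 0.05, 0.10):
        print(f"rate={r:.2f}  NPV={net_present_value(r, flows):.2f}")
```

The same project is worth 20 undiscounted, about 8 at 5%, and turns slightly negative at 10%, so the choice of discount rate can decide the outcome on its own.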

Formally, "finding the 'best' values of a decision vector can be formulated as a stochastic optimization problem, with two basic difficulties. First, it is assumed that the probability distribution is known. In real-life applications the probability distribution is never known exactly. In some cases it can be estimated from historical data by statistical techniques. However, in many cases the probability distribution can neither be estimated accurately nor be assumed to remain constant. Even worse, quite often one subjectively assigns certain weights (probabilities) to a finite number of possible realizations (called scenarios) of the uncertain data. The second basic question is why we want to optimize the expected value of the random outcome. In some situations the same decisions under similar conditions are made repeatedly over a certain period of time. In such cases one can justify optimization of the expected value by arguing that, by the Law of Large Numbers, it gives an optimal decision on average. However, because of the variability of the data, the average of the first few results may be very bad. For these reasons, quantitative models of risk and risk aversion are needed".
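A small scenario example shows why expected value alone may not be the right criterion. This sketch swaps in one common risk measure, Conditional Value-at-Risk (CVaR, here the average outcome over the worst `alpha` share of probability mass); the scenario weights and payoffs are hypothetical and the choice of CVaR is ours, not the quoted author's.

```python
from typing import Sequence


def expected_value(outcomes: Sequence[float], probs: Sequence[float]) -> float:
    """Probability-weighted average outcome."""
    return sum(x * p for x, p in zip(outcomes, probs))


def cvar_lower(outcomes: Sequence[float], probs: Sequence[float], alpha: float) -> float:
    """Average outcome over the worst alpha share of probability mass
    (CVaR expressed on gains: higher means safer)."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    remaining = alpha
    total = 0.0
    for x, p in sorted(zip(outcomes, probs)):  # worst outcomes first
        take = min(p, remaining)
        total += take * x
        remaining -= take
        if remaining <= 0:
            break
    return total / alpha


if __name__ == "__main__":
    # Hypothetical, subjectively weighted scenarios and the payoff of two decisions.
    probs = [0.1, 0.3, 0.6]
    decisions = {"A": [-50.0, 20.0, 40.0], "B": [5.0, 15.0, 25.0]}
    for name, payoff in decisions.items():
        ev = expected_value(payoff, probs)
        tail = cvar_lower(payoff, probs, alpha=0.1)
        print(f"{name}: expected value={ev:.1f}  CVaR(10%)={tail:.1f}")
```

Decision A wins on expected value (25 against 20) but its worst 10% averages -50, against +5 for B; a risk-averse criterion reverses the ranking.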

Some risks and uncertainties are already well known methodologically, such as risk rates and conventional (or "state of the art") ratios. This holds technically and technologically, that is, according to established science and known safety margins for covering risks: they ensure that your bridge will not fall easily, a dam will not fill too fast, towers will not burn down because of a single cigarette, and houses will not be torn to pieces by a small tornado.
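Engineering safety margins can be expressed either as a safety factor or as a failure probability. A minimal sketch of the textbook first-order reliability idea, assuming resistance R and load S are independent and normally distributed (a strong simplification); all numbers are hypothetical.

```python
import math


def normal_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def central_safety_factor(mean_resistance: float, mean_load: float) -> float:
    """Ratio of mean capacity to mean demand."""
    return mean_resistance / mean_load


def reliability_index(mu_r: float, sd_r: float, mu_s: float, sd_s: float) -> float:
    """beta = (mu_R - mu_S) / sqrt(sd_R^2 + sd_S^2) for independent normal R and S."""
    return (mu_r - mu_s) / math.sqrt(sd_r ** 2 + sd_s ** 2)


def failure_probability(beta: float) -> float:
    """P(R - S < 0) = Phi(-beta) under the normal assumption."""
    return normal_cdf(-beta)


if __name__ == "__main__":
    # Hypothetical beam: resistance 300 +/- 30 kN, load 200 +/- 20 kN.
    sf = central_safety_factor(300.0, 200.0)
    beta = reliability_index(300.0, 30.0, 200.0, 20.0)
    print(f"safety factor={sf:.2f}  beta={beta:.3f}  P(failure)={failure_probability(beta):.5f}")
```

A safety factor of 1.5 sounds comfortable, yet with these spreads the failure probability is still close to 3 in 1,000; the margin only means something together with the variability it has to cover.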

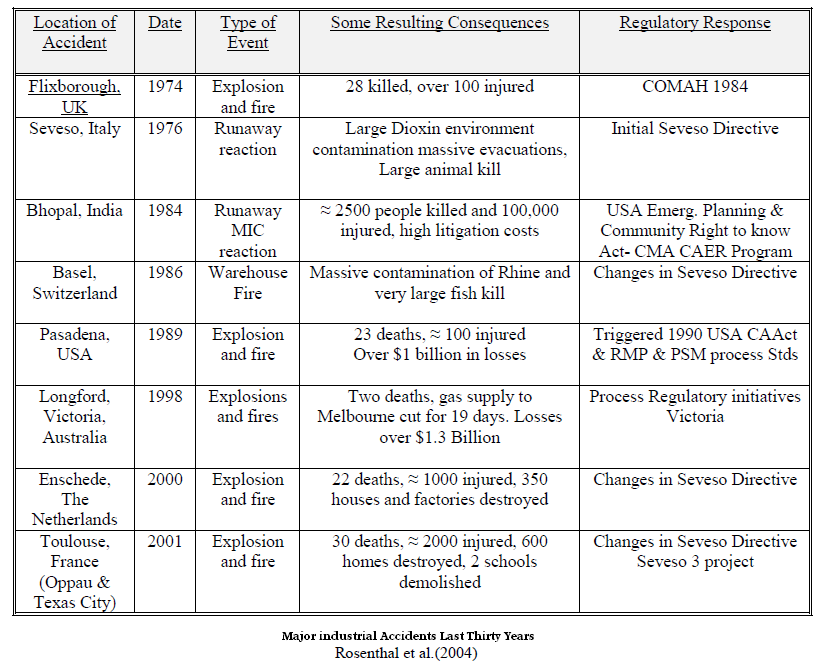

Besides technological risks (which some technologists call cindynics, a kind of systemic science of technological risk) and the vulnerability of structures in industrial and large-scale processes, including human-made and natural disasters, there is a whole art of balancing knowledge, studies and legal regulation. For example: "the process of classification of the toxic properties of hazardous materials is not straightforward. Even when the documentation is clear and comprehensive it can take decades before the authorities decide to take action. Moreover, a classification is always on trial because new scientific knowledge can lead to reclassification. It is not easy to understand the politics of the regulatory game because issues other than scientific results set the agenda. Further, the economic consequences for the technological industry and society are always taken into consideration before risk reduction strategies for hazardous materials are decided and implemented".

Subjectivity, or sensitivity, is far from excluded: regulations have often been ratified after near-accidents that were not particularly significant in terms of workplace deaths.

Risks to my Environment (Go: NIMBY!)

"There is a growing awareness that ecological risk assessments (ERAs) could be improved if they made better use of ecological information. In particular, landscape features that determine the quality of wildlife habitat can have a profound influence on the estimated exposure to stressors incurred by animals when they occupy a particular area. Various approaches to characterizing the quality of habitat for a given species have existed for some time. These approaches fall into three generalized categories: 1) entirely qualitative as in suitable or unsuitable, 2) semiquantitative as in formalized habitat suitability index models, or 3) highly quantitative site-specific characterization of population demographic data such as matrix population models or multiple regression models.

Such information can be used to generate spatially explicit estimates of exposure to chemicals or other environmental stressors, e.g., invasive species, physical perturbation, that take into account the magnitude of co-occurrence of the animals and stressors as they forage across a landscape".
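A spatially explicit exposure estimate can be sketched very simply. Assuming (our assumption, not the quoted authors') that the time an animal spends in each landscape cell is proportional to that cell's habitat suitability index, exposure becomes a suitability-weighted average of the stressor concentration; the four-cell landscape below is hypothetical.

```python
from typing import Sequence


def habitat_weighted_exposure(suitability: Sequence[float], concentration: Sequence[float]) -> float:
    """Average stressor level met by an animal whose time in each cell is
    proportional to habitat suitability (an assumption of this sketch)."""
    total = sum(suitability)
    if total <= 0:
        raise ValueError("at least one cell must have positive suitability")
    return sum(h * c for h, c in zip(suitability, concentration)) / total


def uniform_exposure(concentration: Sequence[float]) -> float:
    """Habitat-blind estimate: the animal uses every cell equally."""
    return sum(concentration) / len(concentration)


if __name__ == "__main__":
    # Hypothetical landscape: habitat suitability index (0..1) and contaminant level (mg/kg) per cell.
    hsi = [0.9, 0.1, 0.5, 0.5]
    conc = [10.0, 100.0, 20.0, 40.0]
    print(f"habitat-weighted: {habitat_weighted_exposure(hsi, conc):.1f} mg/kg")
    print(f"uniform:          {uniform_exposure(conc):.1f} mg/kg")
```

Because the most contaminated cell is poor habitat, the habitat-weighted estimate (24.5 mg/kg) is far below the habitat-blind one (42.5 mg/kg); with the opposite pattern it would be higher, which is why landscape features matter in an ERA.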

"Moreover, key processes of speciation, endemism, coexistence, extinction, and the differential vulnerability of taxa and habitats are not adequately understood, as the following hypotheses illustrate:

- Diversity–stability: predicts a linear relationship in which the rate of ecosystem processes increases as the number of species increases.

- Rivet–Popper: predicts a positive nonlinear relationship and assumes that all species are equally important; the deletion of species gradually weakens the system and, beyond some threshold number, may cause the ecosystem to collapse.

- Redundancy: considers most species superfluous, only functional groups being important; species within the same functional group are more expendable relative to one another than species without functional analogues.

- Idiosyncratic: acknowledges no relationship, or an indeterminate one, between species diversity and ecosystem function; the identity and the order of deletion of species will affect ecosystem function".
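These hypotheses can be contrasted with stylized response functions. The sketch below is only a caricature under our own assumptions: ecosystem function is scaled to 1.0 with all species present, the rivet-popper curve uses a squared term plus an arbitrary collapse threshold, and redundancy counts the share of functional groups still represented. Species names, groups and the deletion order are hypothetical.

```python
from typing import Dict, Iterable


def linear_response(n_remaining: int, n_total: int) -> float:
    """Diversity-stability reading: function falls in proportion to species lost."""
    return n_remaining / n_total


def rivet_response(n_remaining: int, n_total: int, collapse_below: int) -> float:
    """Rivet-popper reading: gradual, accelerating weakening, then collapse
    below a threshold (squared term and threshold are illustrative choices)."""
    if n_remaining < collapse_below:
        return 0.0
    return (n_remaining / n_total) ** 2


def redundancy_response(remaining: Iterable[str], group_of: Dict[str, str]) -> float:
    """Redundancy reading: only the share of functional groups still represented matters."""
    all_groups = set(group_of.values())
    present = {group_of[s] for s in remaining}
    return len(present) / len(all_groups)


if __name__ == "__main__":
    # Hypothetical community: two species in group A, two in B, one in C.
    group_of = {"a1": "A", "a2": "A", "b1": "B", "b2": "B", "c1": "C"}
    order = ["a1", "b1", "c1", "a2"]  # hypothetical deletion sequence
    remaining = list(group_of)
    n_total = len(remaining)
    for lost in order:
        remaining.remove(lost)
        n = len(remaining)
        print(f"lost {lost}: linear={linear_response(n, n_total):.2f}  "
              f"rivet={rivet_response(n, n_total, collapse_below=2):.2f}  "
              f"redundancy={redundancy_response(remaining, group_of):.2f}")
```

With this order, the redundancy reading stays at 1.00 for the first two deletions and only drops when the lone member of group C is lost; a different order would give a different curve, which is the idiosyncratic hypothesis' point that identity and order of deletion matter, and why it is not given a fixed formula here.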